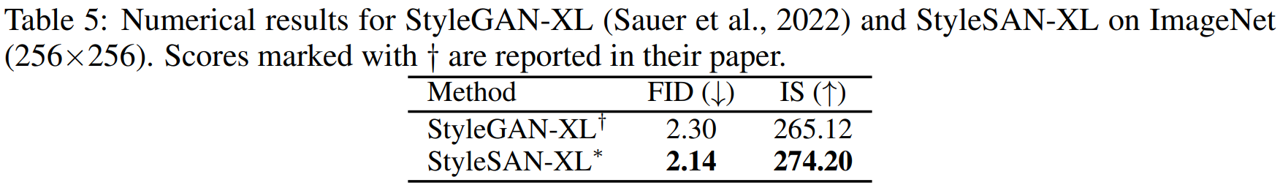

A simple but theoretically sound modification to the discriminator improves GAN performance and yields a novel class of generative models called the Slicing Adversarial Network (SAN). Applying SAN to StyleGAN-XL achieves state-of-the-art performance among GANs on ImageNet 256x256 (FID: 2.14, IS: 274.20).

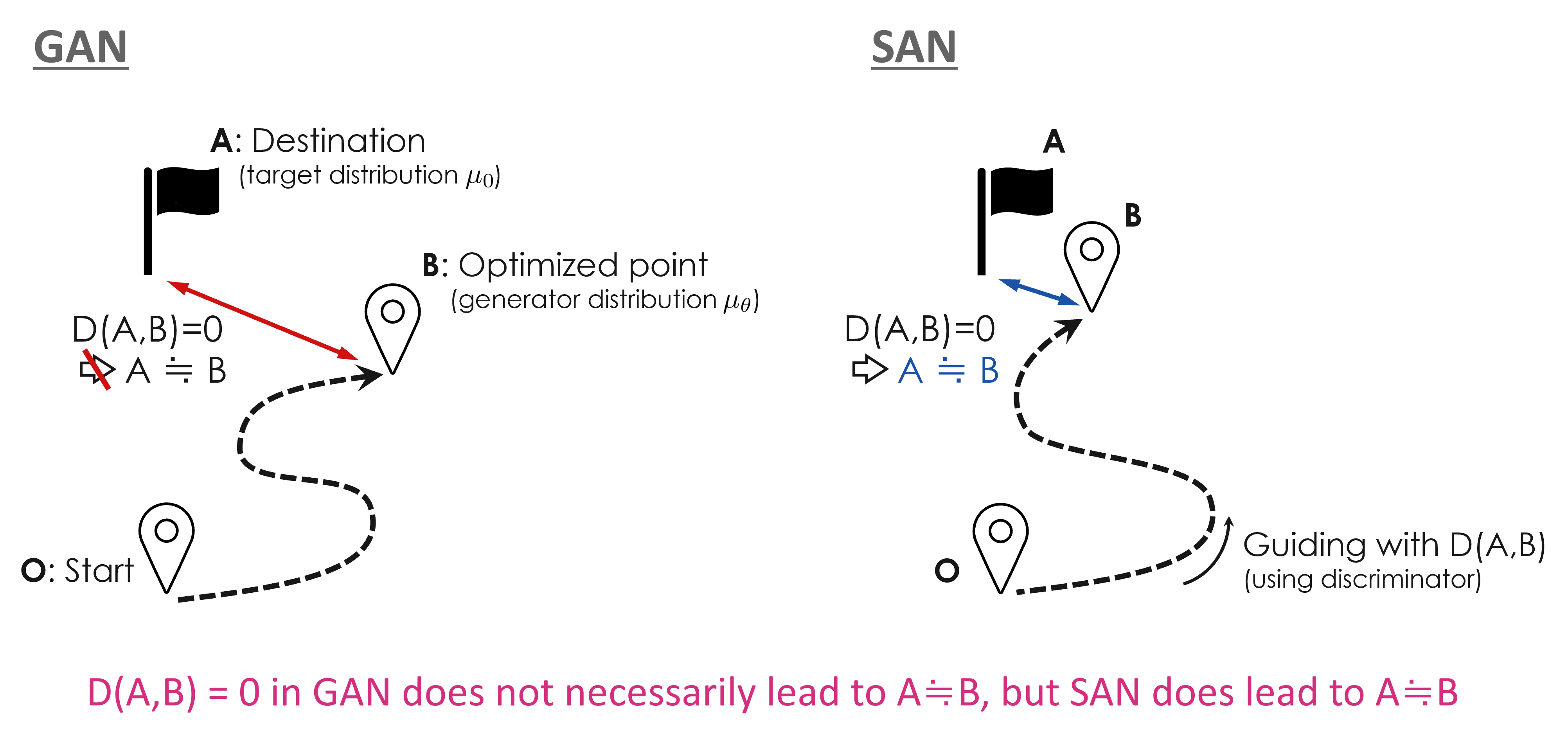

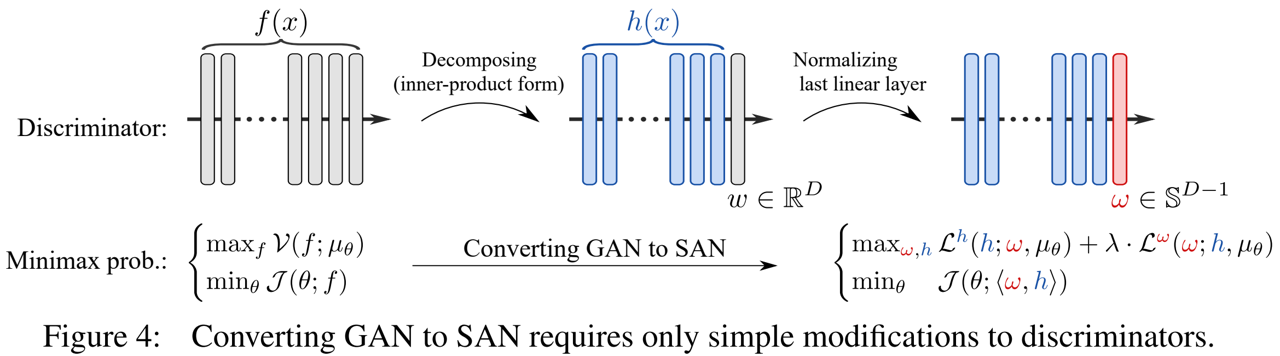

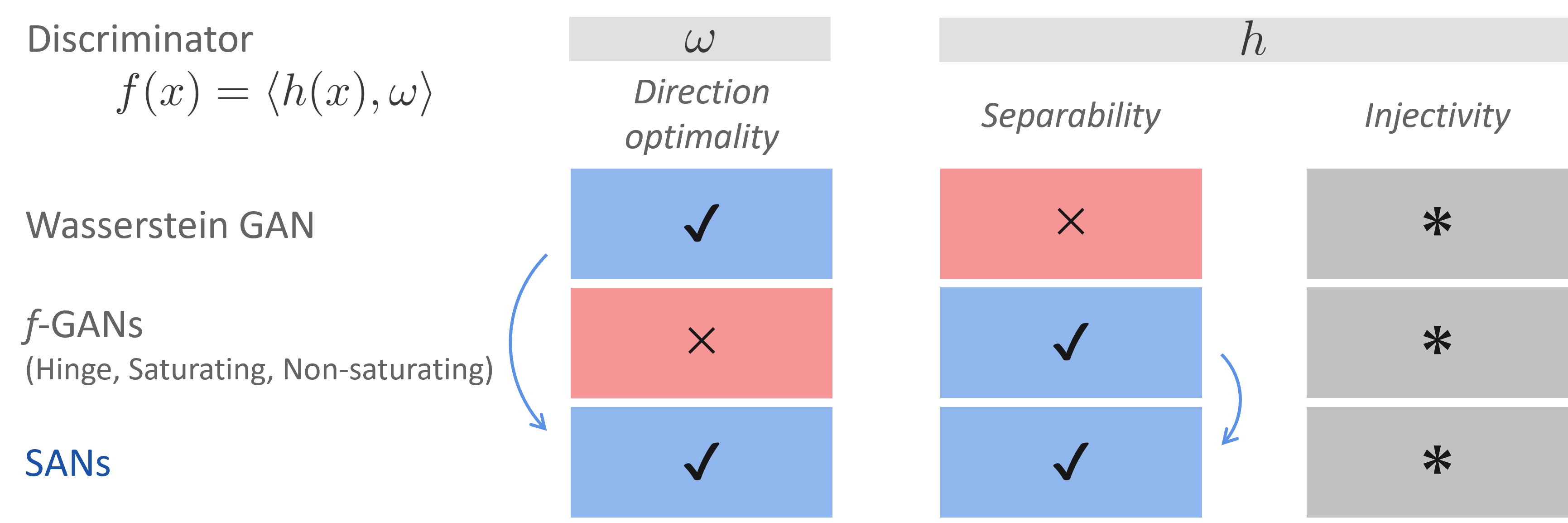

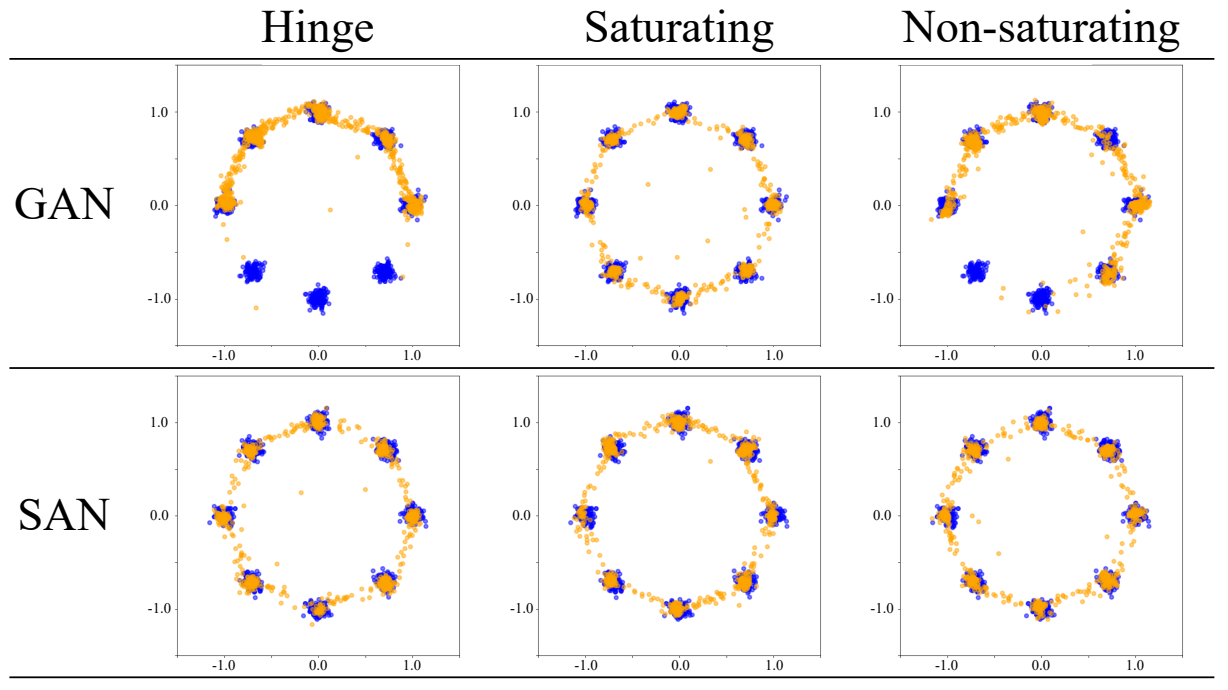

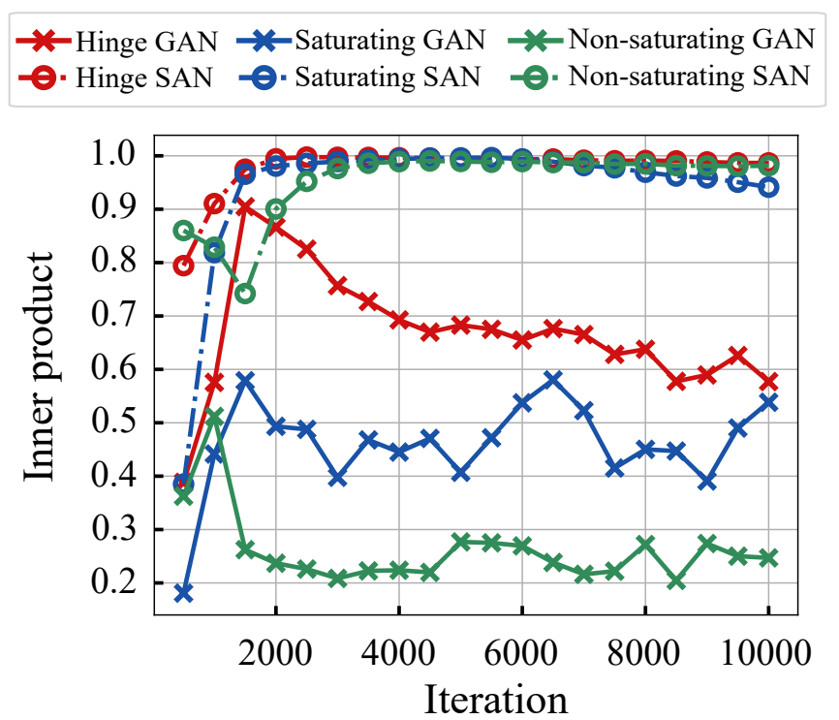

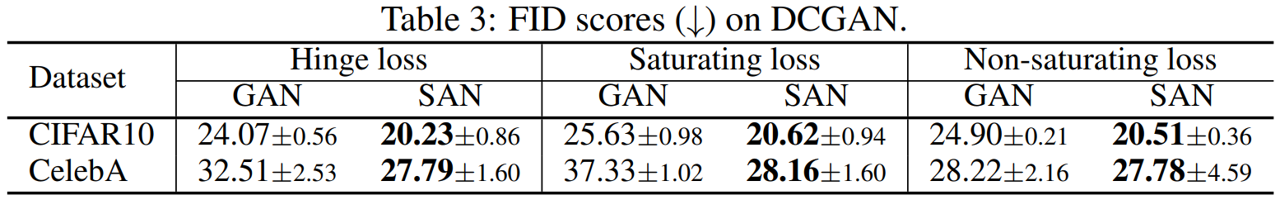

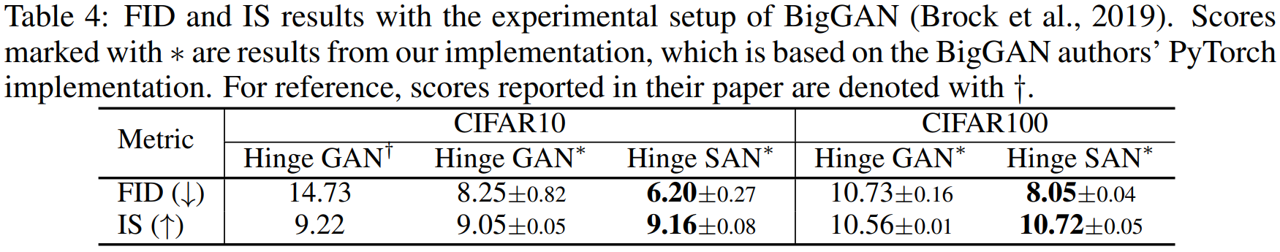

Generative adversarial networks (GANs) learn a target probability distribution by optimizing a generator and a discriminator with minimax objectives. This paper addresses the question of whether such optimization actually provides the generator with gradients that make its distribution close to the target distribution. We derive metrizable conditions, sufficient conditions for the discriminator to serve as the distance between the distributions, by connecting the GAN formulation with the concept of sliced optimal transport. Furthermore, by leveraging these theoretical results, we propose a novel GAN training scheme called the Slicing Adversarial Network (SAN). With only simple modifications, a broad class of existing GANs can be converted to SANs. Experiments on synthetic and image datasets support our theoretical results and the effectiveness of SAN as compared to the usual GANs. We also apply SAN to StyleGAN-XL, which leads to a state-of-the-art FID score amongst GANs for class conditional generation on ImageNet 256x256.
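To make the "sliced" viewpoint in the abstract more concrete, here is a minimal sketch of the underlying idea: project feature vectors onto a unit-norm direction, then compare the two resulting one-dimensional sample sets with a Wasserstein-1 distance. This is an illustration, not the authors' implementation. The function names (`normalize`, `project`, `sliced_w1`) are hypothetical, features are plain Python lists rather than neural network outputs, and the direction is fixed by hand rather than learned.

```python
import math


def normalize(w):
    """Scale a direction vector to unit Euclidean norm."""
    norm = math.sqrt(sum(v * v for v in w))
    if norm == 0.0:
        raise ValueError("direction must be non-zero")
    return [v / norm for v in w]


def project(features, w):
    """Project each feature vector onto the unit direction w / ||w||."""
    unit = normalize(w)
    return [sum(f_i * u_i for f_i, u_i in zip(f, unit)) for f in features]


def sliced_w1(real_feats, fake_feats, w):
    """1-D Wasserstein-1 distance between the two projected sample sets.

    Assumption for this sketch: both sets have the same size, so sorting
    pairs up matching quantiles of the two empirical distributions.
    """
    if len(real_feats) != len(fake_feats):
        raise ValueError("this sketch assumes equally sized sample sets")
    a = sorted(project(real_feats, w))
    b = sorted(project(fake_feats, w))
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)


# Toy data: the two sets differ only along the first axis.
real = [[0.0, 0.0], [1.0, 0.0]]
fake = [[2.0, 0.0], [3.0, 0.0]]

informative = sliced_w1(real, fake, [5.0, 0.0])    # direction along the gap
uninformative = sliced_w1(real, fake, [0.0, 1.0])  # direction orthogonal to it
```

In this toy case the first direction separates the two sets while the second sees no difference at all, which illustrates why the choice of projection direction matters when a discriminator is meant to act as a distance between distributions.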

@inproceedings{takida2024san,
  title={{SAN}: Inducing Metrizability of GAN with Discriminative Normalized Linear Layer},
  author={Takida, Yuhta and Imaizumi, Masaaki and Shibuya, Takashi and Lai, Chieh-Hsin and Uesaka, Toshimitsu and Murata, Naoki and Mitsufuji, Yuki},
  booktitle={The Twelfth International Conference on Learning Representations},
  year={2024},
  url={https://openreview.net/forum?id=eiF7TU1E8E}
}